Many people find themselves stumped by the so-called Blue-Eyed Islanders puzzle. There is also much controversy over its supposed solution. I'm going to analyze the problem and the solution and, in the process, explain why the solution works.

To begin, let's modify the problem slightly and say that there's only 1 blue-eyed islander. When the foreigner makes his pronouncement, the blue-eyed islander looks around and sees no other blue eyes. Being logical, he correctly deduces that his own eyes must be blue for the foreigner's statement to make sense. The lone blue-eyed islander thus commits suicide the following day at noon.

Now comes the tricky part, and the source of much confusion. Let's say there are 2 blue-eyed islanders, Mort and Bob. When the foreigner makes his pronouncement, Mort and Bob look around and see only each other. Mort and Bob thus both temporarily assume that the other will commit suicide the following day at noon. Imagine their chagrin...
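The 1- and 2-islander reasoning can be checked mechanically with a possible-worlds (Kripke-style) model. The sketch below is an illustration under stated assumptions, not the author's method. It assumes the foreigner's pronouncement rules out exactly the world in which nobody has blue eyes, and that an islander dies at noon on the day he first knows his eyes are blue. Each islander cannot see his own eyes, so the only alternative he considers is the world with his own color flipped. He knows his color once that alternative is excluded, either by the pronouncement or because it would have produced a different pattern of deaths on earlier days. The names `flip` and `simulate` are hypothetical.

```python
from itertools import product


def flip(world, i):
    """Return a copy of `world` with islander i's eye color toggled."""
    return world[:i] + (not world[i],) + world[i + 1:]


def simulate(actual, max_days=None):
    """Possible-worlds model of the puzzle.

    `actual` is a tuple of booleans (True = blue eyes). Assumption: the
    foreigner's pronouncement rules out only the world with no blue eyes.
    Returns (day, islanders who die that day) for the actual world.
    """
    n = len(actual)
    max_days = max_days or n + 1
    worlds = [w for w in product((False, True), repeat=n) if any(w)]
    possible = set(worlds)
    # history[w] = list of the sets of islanders who died on each past day in world w
    history = {w: [] for w in worlds}

    for day in range(1, max_days + 1):
        deaths = {}
        for w in worlds:
            dead = set().union(*history[w])
            dying = set()
            for i in range(n):
                if not w[i] or i in dead:
                    continue
                # The only other world islander i considers is `alt`.
                # He knows he is blue once `alt` is ruled out by the
                # pronouncement or by a different history of deaths.
                alt = flip(w, i)
                if alt not in possible or history[alt] != history[w]:
                    dying.add(i)
            deaths[w] = frozenset(dying)
        # Deaths are public: record them only after every world is evaluated.
        for w in worlds:
            history[w].append(deaths[w])
        if deaths[actual]:
            return day, sorted(deaths[actual])
    return None, []


for k in range(1, 5):
    actual = (True,) * k + (False,) * (5 - k)
    print(k, simulate(actual))
```

Under these assumptions the model reports that k blue-eyed islanders all die together at noon on day k. With Mort and Bob, each one's alternative world (the other as the lone blue-eyed islander) would have produced a death on day 1; when none happens, both conclude on day 2.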

Comments

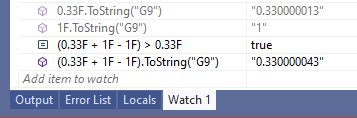

That's not true on .NET Core/.NET 5+. When I run `(0.33F + 1F - 1F).ToString()` there, the output I get is "0.33000004".

All floating-point numbers have a limited number of significant digits, which also determines how accurately a floating-point value approximates a real number. A Single value has up to 7 decimal digits of precision, although a maximum of 9 digits is maintained internally.
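A rough Python sketch of where "0.33000004" can come from. Python has no native 32-bit float, so the hypothetical helper `to_f32` emulates a Single by packing into `struct`'s 4-byte `f` format. A double has more than twice float32's precision, so rounding each double result to float32 gives the same answer as native float32 addition and subtraction. The sketch evaluates 0.33F + 1F - 1F that way and prints the stored value two ways: as the shortest string that round-trips to the same float32 (the hypothetical helper `shortest_f32_repr`) and with 7 significant digits. This assumes that .NET Core 3.0 and later format `Single.ToString()` with the shortest round-trippable string while .NET Framework defaulted to 7 significant digits; that is not something this sketch can verify.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (IEEE 754 double) to the nearest float32."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def shortest_f32_repr(x: float) -> str:
    """Shortest %g-style decimal string that round-trips to the same float32."""
    for digits in range(1, 10):  # 9 significant digits always suffice for float32
        s = f"{x:.{digits}g}"
        if to_f32(float(s)) == x:
            return s
    return repr(x)


# Emulate 0.33F + 1F - 1F, rounding to float32 after every operation.
a = to_f32(0.33)        # nearest float32 to 0.33 is slightly above 0.33
b = to_f32(a + 1.0)     # 1.0 is exact in float32; this sum gets rounded
c = to_f32(b - 1.0)     # exact subtraction, but the earlier rounding remains

print(shortest_f32_repr(c))  # shortest round-trip form
print(f"{c:.7g}")            # 7 significant digits
```

The first line prints 0.33000004 and the second prints 0.33: the stored float32 is the same in both cases, and only the number of digits chosen for display differs.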

If you're claiming that the expression I quoted produces different results on your runtime, then great: you've just proved my point again. Floating point is a quagmire because different optimizations and compilations of floating-point code can produce different results.